Security teams and engineering leaders are having the same conversation at a lot of enterprises right now.

The business wants AI in the product. Legal wants to know where the data goes. The CTO wants to know if the infrastructure will hold under real load. And everyone wants to know who’s responsible when something goes wrong.

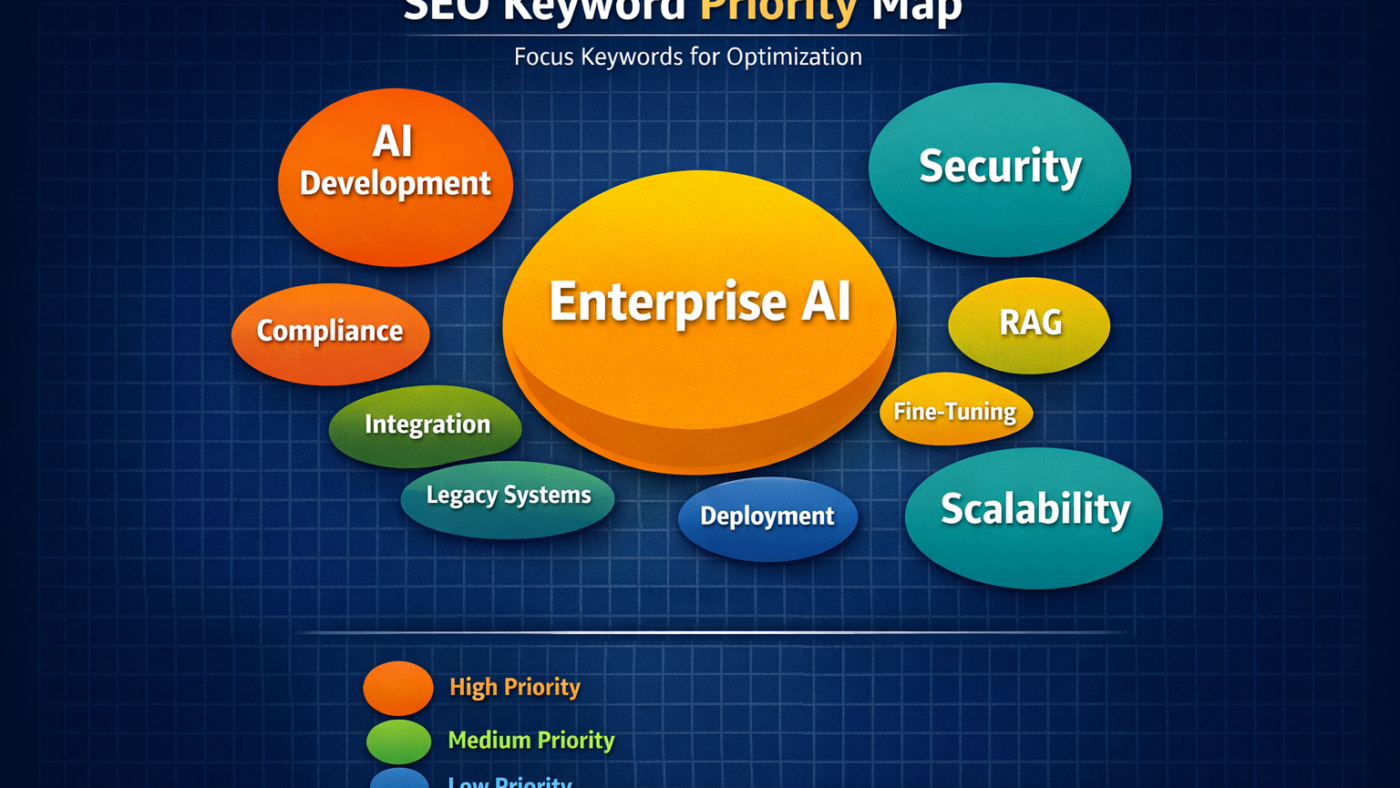

These aren’t reasons to slow down AI adoption. They’re reasons to build it correctly from the start. This guide covers what enterprise-grade AI development actually requires, where security and scalability decisions get made, and how organizations can move fast without creating problems they’ll spend the next two years fixing.

Why Enterprise AI Builds Are Different From Everything Else

Consumer AI applications and enterprise AI applications share the same underlying models. The similarities end there.

Enterprise deployments sit inside regulated industries, complex legacy infrastructure, and organizations where a single bad output can carry legal, financial, or reputational consequences. The stakes on architecture decisions are higher. The margin for getting it wrong is smaller.

A startup shipping an AI writing tool can iterate quickly in public. A bank deploying AI to handle customer account inquiries cannot afford to learn from production failures. The build philosophy is fundamentally different, and the development partners required are different too.

Enterprises need AI systems that are accurate under production load, auditable for compliance purposes, restricted by role-based access controls, and monitored continuously after deployment. Building all of that takes a different kind of development engagement than most early-stage AI projects require.

The Security Architecture That Can’t Be Skipped

Data security is where most enterprise AI projects get slowed down, usually because the security requirements weren’t scoped into the build from day one.

The core issue is data exposure. AI systems trained on or connected to enterprise data need strict boundaries around what gets accessed, by whom, and under what conditions. Enterprise-grade deployments need granular data access controls that scale with the organization: access control lists enforced in real time, role-based permissions, and comprehensive audit trails. These are prerequisites for production deployment, not enhancements.

Without these controls, an AI system that works perfectly from a functionality standpoint can still represent a compliance failure. In financial services, healthcare, and legal sectors, that failure has regulatory consequences.

The practical checklist for security architecture in enterprise AI builds includes: data isolation between tenants in multi-tenant deployments, end-to-end encryption for data in transit and at rest, audit logging for every query and output, role-based access that mirrors existing organizational permissions, and output validation that catches sensitive data appearing in generated responses.

Any AI development partner who treats these as post-launch additions is asking you to take on risk that belongs in the build phase.
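Three items from the checklist above (role-based access, audit logging, and output validation) can be sketched in a few lines. This is a minimal illustration, not a production design: the role names, collection names, and the `guarded_answer` helper are hypothetical, the `generate` argument stands in for whatever model call a real system makes, and the two regular expressions catch only simple US SSN-like and email-like strings.

```python
import re
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-collection permissions. In practice these would be
# derived from the organization's existing identity and access system.
ROLE_PERMISSIONS = {
    "support_agent": {"product_docs", "ticket_history"},
    "finance_analyst": {"product_docs", "ledger_reports"},
}

# Illustrative patterns only; real deployments need far broader detection.
SENSITIVE_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),       # US SSN-like numbers
    re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+"),     # email-like strings
]


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, user, role, collection, allowed, redactions=0):
        # Every request is logged, including the ones that were denied.
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "collection": collection,
            "allowed": allowed,
            "redactions": redactions,
        })


def can_access(role, collection):
    return collection in ROLE_PERMISSIONS.get(role, set())


def redact(text):
    """Replace sensitive-looking spans and return (clean_text, count)."""
    count = 0
    for pattern in SENSITIVE_PATTERNS:
        text, n = pattern.subn("[REDACTED]", text)
        count += n
    return text, count


def guarded_answer(user, role, collection, generate, log):
    """Check access, call the model stand-in, validate output, log the result."""
    if not can_access(role, collection):
        log.record(user, role, collection, allowed=False)
        raise PermissionError(f"role {role!r} may not query {collection!r}")
    safe_text, redactions = redact(generate())
    log.record(user, role, collection, allowed=True, redactions=redactions)
    return safe_text
```

The point of the sketch is ordering: the permission check runs before the model is called, and the audit entry is written whether the request succeeds or not.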

Where AI-Powered Text Generation Fits Into Enterprise Applications

AI-powered text generation is one of the highest-adoption use cases in enterprise AI right now, and one of the highest-risk if implemented without proper controls.

The volume case is straightforward. AI text generators are reported to cut content creation time by up to 70% across marketing, operations, and communications functions. For enterprises producing documentation, compliance reports, customer communications, and product content at scale, an efficiency gain of that size translates directly to cost reduction and output consistency.

The risk is equally straightforward. AI-powered text generation connected to proprietary systems can surface confidential data in outputs if guardrails aren’t built in. It can produce factually incorrect content at high volume if the underlying model lacks grounding in verified enterprise data. And it can create compliance exposure in regulated industries if outputs aren’t validated before reaching customers or external stakeholders.

Getting the infrastructure right means implementing retrieval-augmented generation (RAG) to ground outputs in current, verified enterprise data rather than static training data alone. A pre-trained model on its own can only answer from what it saw during training. RAG retrieves curated, proprietary information at query time and passes it to the model as context, which helps enterprises build systems that are compliant, secure, and scalable.
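The retrieval step can be illustrated with a deliberately small sketch. It assumes a tiny in-memory knowledge base and uses plain word overlap as a stand-in for the embedding-based vector search a real system would likely use. The document IDs, texts, and function names are hypothetical, and the sketch stops at building the prompt rather than calling any particular model.

```python
import re

# Hypothetical knowledge base; in production this would be a managed,
# access-controlled document store that is updated as the business changes.
KNOWLEDGE_BASE = [
    {"id": "kb-1", "text": "Refunds are processed within 5 business days."},
    {"id": "kb-2", "text": "Enterprise plans include single sign-on and audit logs."},
    {"id": "kb-3", "text": "Support hours are 9am to 6pm on weekdays."},
]


def tokenize(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, top_k=2):
    """Rank documents by word overlap with the query (a stand-in for vector search)."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(doc["text"])), doc) for doc in documents]
    scored = [(score, doc) for score, doc in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_prompt(query, passages):
    """Assemble a grounded prompt that cites the passages by ID."""
    context = "\n".join(f"[{p['id']}] {p['text']}" for p in passages)
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


question = "How long do refunds take to be processed?"
prompt = build_prompt(question, retrieve(question, KNOWLEDGE_BASE, top_k=1))
```

Because the passages are fetched at query time, editing or adding an entry in the knowledge base changes the next answer without touching the model.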

Scalability Is an Architecture Decision, Not a Deployment Decision

A lot of enterprise AI systems work fine in testing and fail under real user load. This is almost always an architecture problem, not a capacity problem.

Scalability has to be designed into the system before deployment, through horizontal scaling capabilities, efficient model serving infrastructure, caching strategies for repeated query patterns, and load balancing across inference endpoints. Retrofitting scalability after a system is live is expensive and disruptive.
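Two of those techniques, caching repeated queries and balancing load across inference endpoints, fit in a short sketch. It assumes each endpoint is a plain Python callable (a stand-in for a real inference service) and uses simple round-robin selection. The `InferenceRouter` class and its parameters are hypothetical names for illustration.

```python
import hashlib
import itertools
from collections import OrderedDict


class LRUCache:
    """Small least-recently-used cache for repeated prompts."""

    def __init__(self, capacity):
        self.capacity = capacity
        self._items = OrderedDict()

    def get(self, key):
        if key not in self._items:
            return None
        self._items.move_to_end(key)  # mark as most recently used
        return self._items[key]

    def put(self, key, value):
        self._items[key] = value
        self._items.move_to_end(key)
        if len(self._items) > self.capacity:
            self._items.popitem(last=False)  # evict the least recently used


class InferenceRouter:
    """Serve cached answers when possible, otherwise rotate across endpoints."""

    def __init__(self, endpoints, cache_size=1024):
        self._cycle = itertools.cycle(endpoints)
        self._cache = LRUCache(cache_size)
        self.calls = 0  # number of requests that actually reached an endpoint

    @staticmethod
    def _key(prompt):
        # Normalize case and whitespace so trivially different prompts share a key.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def infer(self, prompt):
        key = self._key(prompt)
        cached = self._cache.get(key)
        if cached is not None:
            return cached
        endpoint = next(self._cycle)
        self.calls += 1
        result = endpoint(prompt)
        self._cache.put(key, result)
        return result
```

One design caution: a shared cache like this is only safe when the answer does not depend on who is asking. Where outputs vary by role or tenant, the cache key would need to include that context.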

A scalable AI system keeps handling the workload as usage grows. Enterprises should prioritize solutions with proven reliability under high demand and assess performance with concrete metrics such as inference time and resource utilization.
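Inference time is usually more informative as a distribution than as an average, since tail latency is what users notice under load. The sketch below times a callable over a set of inputs and reports nearest-rank percentiles. The function names are hypothetical, and `fn` stands in for whatever inference call is being evaluated.

```python
import math
import time


def percentile(samples, pct):
    """Nearest-rank percentile for pct in (0, 100]."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def measure_latency(fn, inputs):
    """Time one call per input and return the latencies in seconds."""
    latencies = []
    for item in inputs:
        start = time.perf_counter()
        fn(item)
        latencies.append(time.perf_counter() - start)
    return latencies


def summarize(latencies):
    return {
        "p50": percentile(latencies, 50),
        "p95": percentile(latencies, 95),
        "max": max(latencies),
    }
```

Running the same measurement at increasing levels of concurrency, and alongside CPU, GPU, and memory readings, shows where a design starts to degrade.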

For enterprises running AI across multiple business units or geographies, the scalability question extends to multi-region deployment. Latency for users in different locations, data residency requirements in different regulatory environments, and consistent performance across varying infrastructure conditions all need to be addressed in the architecture design, not discovered during rollout.
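A routing rule that treats data residency as a hard constraint and latency as a preference might look like the following sketch. The region names, latency figures, and residency policy are invented for illustration and are not a statement of what any regulation permits. A real policy would come from legal and compliance review.

```python
from dataclasses import dataclass


@dataclass
class Region:
    name: str
    jurisdiction: str
    latency_ms: dict  # hypothetical measured latency per user location


REGIONS = [
    Region("eu-west", "EU", {"DE": 20, "US": 110, "AE": 90}),
    Region("us-east", "US", {"DE": 100, "US": 15, "AE": 180}),
    Region("me-central", "AE", {"DE": 85, "US": 190, "AE": 10}),
]

# Hypothetical policy: jurisdictions allowed to process data from each location.
RESIDENCY_POLICY = {
    "DE": {"EU"},
    "US": {"US", "EU"},
    "AE": {"AE"},
}


def choose_region(user_location, regions=REGIONS, policy=RESIDENCY_POLICY, healthy=None):
    """Pick the lowest-latency healthy region that satisfies the residency policy."""
    allowed = policy.get(user_location)
    if not allowed:
        raise ValueError(f"no residency policy for {user_location!r}")
    candidates = [
        region for region in regions
        if region.jurisdiction in allowed
        and (healthy is None or region.name in healthy)
    ]
    if not candidates:
        # Fail closed: never fall back to a region the policy does not allow.
        raise LookupError(f"no compliant region available for {user_location!r}")
    return min(candidates, key=lambda region: region.latency_ms[user_location])
```

The failure mode is the design choice worth noting: when no compliant region is available, the request is rejected rather than silently routed somewhere faster.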

The RAG vs. Fine-Tuning Decision Most Enterprises Get Wrong

When building enterprise AI applications that require domain-specific knowledge, most development teams face a choice between fine-tuning a model on proprietary data or implementing a RAG architecture. The choice has significant implications for both security and scalability.

Fine-tuning bakes enterprise knowledge into the model weights. It produces strong performance on well-defined tasks with stable datasets. The problems emerge over time. Fine-tuning comes with high maintenance costs, slower adaptability to new information, and potential data privacy concerns that hinder its practicality in enterprise environments. Every time the underlying information changes, the model needs retraining. That’s operationally expensive and creates windows where the deployed model is working from outdated information.

RAG architectures retrieve information at query time from live knowledge bases, meaning outputs stay current without retraining. For enterprises where data changes frequently, regulations update, or product information evolves continuously, RAG is operationally more practical and significantly easier to maintain.

The right answer depends on the specific use case. A customer support system where product knowledge changes weekly benefits from RAG. A specialized classification system working on a stable dataset may benefit from fine-tuning. Development teams that default to one approach regardless of context are optimizing for their workflow, not yours.

Integration With Legacy Systems Is Where Timelines Slip

Every enterprise AI implementation eventually confronts the legacy system problem.

Existing ERP platforms, CRM systems, data warehouses, and internal tools weren’t built to connect to AI inference pipelines. Bridging that gap requires API development, data pipeline work, authentication integration, and often significant data cleaning before any AI model can make useful inferences from enterprise data.

This is one of the most underestimated costs in enterprise AI projects. Technical teams that scope the AI build carefully often underscope the integration work. The result is a deployment that runs months behind schedule because the AI system was ready before the data it needed was accessible.

Development partners with real enterprise integration experience scope this work separately and realistically. They identify which legacy systems need to be touched, what data transformation is required, and what the integration timeline looks like before the first line of AI development code is written.
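The data cleaning part of that integration work is often mundane but unavoidable. As a minimal sketch, assume a legacy CRM export with hypothetical column names (`CUST_NAME`, `EMAIL_ADDR`, `CREATED`) and inconsistent date formats. A normalization step might return both the cleaned record and a list of issues, so that bad rows are surfaced rather than silently passed to the model.

```python
from datetime import datetime

# Date formats assumed to appear in the hypothetical legacy export.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%Y%m%d")


def parse_date(value):
    """Return an ISO date string, or None if no known format matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value, fmt).date().isoformat()
        except ValueError:
            continue
    return None


def normalize_customer(raw):
    """Map a legacy CRM row to a clean record plus a list of data issues."""
    name = " ".join(str(raw.get("CUST_NAME", "")).split()).title()
    email = str(raw.get("EMAIL_ADDR", "")).strip().lower()
    created = parse_date(str(raw.get("CREATED", "")).strip())

    issues = []
    if not name:
        issues.append("missing name")
    if "@" not in email:
        issues.append("invalid email")
    if created is None:
        issues.append("unparseable date")

    return {"name": name, "email": email, "created": created}, issues


record, issues = normalize_customer(
    {"CUST_NAME": "  jane   DOE ", "EMAIL_ADDR": " Jane.Doe@Example.COM ", "CREATED": "03/02/2021"}
)
```

Counting the issues across a sample of real exports is one inexpensive way to estimate how much cleaning work the integration scope needs to include.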

Governance and Compliance Can’t Be Bolted On Later

The regulatory environment around enterprise AI is tightening steadily. Compliance with GDPR, CCPA, and other regional regulations is increasingly critical as regulators scrutinize data privacy, bias mitigation, and the potential misuse of generated content.

For enterprise leaders, this means AI governance needs to be part of the build, not a separate workstream that gets addressed after the system is live.

Governance in practice means: documented model cards that describe what each AI system does and what it was trained on, output monitoring that flags responses outside defined parameters, human review workflows for high-stakes outputs, clear escalation paths when the system encounters edge cases, and regular audits against compliance benchmarks.
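Several of these practices can be expressed directly in code. The sketch below pairs a minimal model card with an output check that decides whether a response is released, sent to human review, or escalated. The field names, thresholds, and the idea of a per-response `confidence` score are assumptions for illustration, since not every model or pipeline exposes such a score.

```python
from dataclasses import dataclass, field


@dataclass
class ModelCard:
    """Minimal record of what a system does and what it was built on."""
    name: str
    purpose: str
    training_data: str
    owner: str
    high_stakes_topics: set = field(default_factory=set)


@dataclass
class ReviewDecision:
    action: str   # "release", "human_review", or "escalate"
    reasons: list


def review_output(card, output, confidence, topic, min_confidence=0.8, max_chars=2000):
    """Flag outputs that fall outside defined parameters."""
    if not output.strip():
        # Edge case the system cannot handle on its own: escalate immediately.
        return ReviewDecision("escalate", ["empty output"])

    reasons = []
    if len(output) > max_chars:
        reasons.append("output exceeds length limit")
    if confidence < min_confidence:
        reasons.append("confidence below threshold")
    if topic in card.high_stakes_topics:
        reasons.append(f"high-stakes topic: {topic}")

    return ReviewDecision("human_review" if reasons else "release", reasons)


card = ModelCard(
    name="support-assistant",
    purpose="Draft replies to customer account questions",
    training_data="Hypothetical: base model plus retrieved internal help articles",
    owner="customer-operations",
    high_stakes_topics={"account_closure", "fee_dispute"},
)
```

Storing each `ReviewDecision` alongside the request gives the regular audits something concrete to sample from.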

Industries with specific regulatory frameworks (financial services under SEC and FINRA guidelines, healthcare under HIPAA, and European enterprises under the EU AI Act) have additional requirements on top of general data protection standards. Building without accounting for these creates expensive retrofits and potential regulatory exposure.

Choosing the Right AI Development Partner for Enterprise Builds

The technical requirements for enterprise AI are specific enough that partner selection matters more than most technology procurement decisions.

The questions worth asking go beyond technical capabilities. Ask how many production enterprise AI deployments a partner has completed, not pilots. Ask specifically about security architecture experience and whether they have worked in your regulatory environment. Ask what their model monitoring and post-deployment support model looks like. Ask who owns the code, the models, and the data pipelines at the end of the engagement.

A partner who answers these questions specifically and in detail is one who has navigated them before. One who responds with generalities is one who probably hasn’t.

Devsinc works with enterprise clients on AI development engagements that cover the full build scope, from architecture design and security implementation through integration, deployment, and post-launch monitoring. If you’re planning an enterprise AI project and want to understand what a properly scoped build looks like, their team is worth talking to.

Build It Once, Build It Right

The cost of rebuilding an enterprise AI system that wasn’t architected for security and scale from the start is significantly higher than building it correctly the first time.

The AI text generation software market is projected to grow at a CAGR of 22.49% through 2033, and enterprise adoption is accelerating across every sector. The organizations that establish solid AI infrastructure now will be building on a working foundation as capabilities expand. The ones that take shortcuts will be paying to fix them.

Security, scalability, governance, and integration aren’t constraints on enterprise AI development. They’re the conditions under which enterprise AI actually works in the real world.

The question for engineering leaders isn’t whether to build AI into the product stack. It’s whether the foundation they build it on will hold when it matters.
